Privacy and DEI data: What to know

Hallie Bregman, PhD – Feb 1st, 2023

When employees opt to share personal details with you, they must trust that those details are protected. This article discusses how employers must demonstrate that DEI data is protected, through both ethical commitments and legal regulation.

DEI is not a light and fluffy topic.

The deep inequality that marginalized communities have faced, the ongoing microaggressions, and the blatant harassment: these issues need to be taken seriously. They are human experiences. The data side of DEI is just as serious. Data represents people, and we must protect it and ensure that we act in the best interest of every employee.

Ultimately, DEI depends on trust. Doing this work well requires that employees trust that their data will be kept private, protected, and used in their best interest. That trust rests on shaky ground: data leaks, a lack of transparency, and misuse can all undermine it.

So what measures are in place to counteract these phenomena?

The ethics charter

When embarking on DEI analytics work, there is a critical component to get in place early: the ethics charter.

This charter defines the governance and guiding principles for using DEI data. Without it, decisions may be made without consideration for the best interests of the employee base.

There are several facets to a good ethics charter. We'll review a few below.

Confidentiality

First and foremost is confidentiality. When doing this work, data must be protected. But what does protected mean?

It means that data should not be used in any capacity that will harm the employee. If an employee self-identifies as gay but has not shared this information with their manager, and their manager has access to this information, the outcome is not favorable. If a hiring manager finds out that a candidate is Latino and opts not to move them forward in the process because of it, the outcome is not favorable. If an employee provides survey responses that are identifiable and the manager retaliates, the outcome is not favorable.

All of these situations require confidentiality to prevent misuse.

Confidentiality is the premise that information gathered will not be shared outside of a designated group. Typically, the people analytics team has access to identifiable data, but no one outside that team should be able to access it.

It is worth noting that confidentiality is different from anonymity, in which no identifying information exists anywhere. A cost-benefit analysis should be conducted to determine whether confidentiality is the right approach, but from a holistic perspective, identifying data can be critical for joining data sources to tell an end-to-end story.
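
One way to keep data confidential while still joining sources is to replace raw employee IDs with a keyed hash (a pseudonym) that only the people analytics team can reproduce. The sketch below is an illustration under assumptions, not a prescribed method: the key, the field names (`employee_id`, `salary_band`), and the sample records are all hypothetical.

```python
import hashlib
import hmac

# Hypothetical secret key held only by the people analytics team.
# In practice it would live in a secrets manager, never in source code.
PSEUDONYM_KEY = b"replace-with-a-secret-key"


def pseudonymize(employee_id: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Return a stable keyed hash (HMAC-SHA256) of an employee ID.

    The same ID always maps to the same token, so data sources can still be
    joined, but the token cannot be traced back to a person without the key.
    """
    return hmac.new(key, employee_id.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical records from two separate systems, keyed by raw employee ID.
self_id = [{"employee_id": "E001", "ethnicity": "Latino"}]
compensation = [{"employee_id": "E001", "salary_band": "B3"}]

# Swap raw IDs for pseudonyms before the data reaches the analysis layer.
self_id_p = [
    {"pid": pseudonymize(r["employee_id"]), "ethnicity": r["ethnicity"]}
    for r in self_id
]
comp_p = {pseudonymize(r["employee_id"]): r["salary_band"] for r in compensation}

# Join the two sources on the pseudonym instead of the raw ID.
joined = [dict(r, salary_band=comp_p[r["pid"]]) for r in self_id_p if r["pid"] in comp_p]
print(joined[0]["ethnicity"], joined[0]["salary_band"])  # Latino B3
```

Because whoever holds the key can regenerate the mapping, data handled this way is confidential rather than anonymous, which is exactly the distinction drawn above.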

Data storage

Of course, confidentiality is only as strong as the way the data is stored. Your ethics charter can and should detail your team's data storage practices.

If a server can be easily accessed, the data is not confidential. Remember the “old” days when you had to be careful printing something private, because others could easily see the print-out first? The same applies to a digital footprint.

Data should not be sent via email; instead, it should be encrypted, password-protected, and moved through a secure transfer process. This is where it is critical to get privacy and engineering teams involved. Robust data storage is a must.

Consent

Consent is another important facet of the ethics charter. Remember the Cambridge Analytica scandal? With appropriate consent, it could have been avoided.

Of course, there are many questions to be answered. The ethics charter must offer guidelines on whose consent is required, how often and when it must be obtained, how it is collected, and what it covers. For instance, a key question: when employees share their identities with an employer, is consent for the employer to use that data for analysis implied? Some organizations say yes, while others require more explicit consent.

Even if employees agree to the use of their self-identification attributes, do they consent to combining them with other data sources, like performance or compensation? What about email and calendar data? If an employee grants consent, does that cover all analysis in perpetuity?

These are difficult questions. Addressing them upfront in an ethics charter makes decisions clear and keeps them in the best interest of the employees.
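
To make these consent questions concrete, a team might record consent per purpose, with an optional expiry, and check it before each analysis. This is a minimal sketch assuming a hypothetical `ConsentRecord` schema and purpose strings such as `"self_id+compensation"`; it is not a standard consent model.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass
class ConsentRecord:
    """Hypothetical record of what one employee has agreed to, and until when."""

    employee_id: str
    # Each purpose names a specific data combination, e.g. "self_id+compensation".
    purposes: set[str] = field(default_factory=set)
    # Optional expiry date; None means the charter set no expiry.
    expires: date | None = None


def may_use(record: ConsentRecord, purpose: str, today: date) -> bool:
    """Allow an analysis only if its purpose was explicitly consented to and consent is still valid."""
    if record.expires is not None and today > record.expires:
        return False
    return purpose in record.purposes


consent = ConsentRecord("E001", {"self_id", "self_id+compensation"}, expires=date(2024, 1, 31))
print(may_use(consent, "self_id+compensation", date(2023, 6, 1)))  # True
print(may_use(consent, "self_id+performance", date(2023, 6, 1)))   # False: never consented
print(may_use(consent, "self_id", date(2024, 6, 1)))               # False: consent expired
```

Listing combinations explicitly, rather than a single yes/no flag, is one way to answer "does consent to self-ID cover joining it with performance data?" with a firm no unless the employee said otherwise.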

What are the results?

One of the biggest reasons that trust is eroded is a lack of transparency. Before employees can even grant consent, an employer must disclose how it intends to use the data. Employees can then make an informed decision about whether they want their information used in that way.

However, this can also lead to confusion about what employees can "know" in return. If an employee grants consent to use their data, does that mean they should also know the results of the analysis? The lines blur quickly. This is where a partnership with privacy and legal teams can be instrumental in shaping the ethics charter. Their expert guidance on the risks and benefits of transparency is key.

More and more, companies are sharing data points publicly. However, some findings still put the company at risk. The question then becomes: is it in the best interest of the employees to keep those findings confidential? Or, despite the risk to the company, do employees have a right to know the results?

The role of regulation

It may seem that the ethics charter is the only way to govern DEI analytics. It is not. There are also regulations that provide guardrails to protect the data.

Perhaps the best-known regulation is the General Data Protection Regulation (GDPR), which governs the use of personal data in Europe. A few key principles of GDPR are widely known in the employee analytics community. First, data may not be collected without a business use case. A common tactic is to start data collection in the US, demonstrate the business value, and then expand data collection to the EU.

Second, an employee has the right to ask for their data to be deleted at any time. This means there must be a mechanism to remove every trace of their information. If the data can be completely anonymized, those data points can be retained. Finally, consent is required. Revisiting the discussion above, consent is not just a nice-to-have. It is a must-have.
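
The deletion mechanism described above has to reach every system that holds an employee's records, not just one table. The sketch below assumes hypothetical in-memory stores named `hris` and `survey`; it only covers the stores it is given, and deciding whether any remaining data is truly anonymized is a separate question it does not answer.

```python
from __future__ import annotations

# Hypothetical in-memory stand-ins for separate systems holding employee data.
stores: dict[str, list[dict]] = {
    "hris": [
        {"employee_id": "E001", "department": "Sales"},
        {"employee_id": "E002", "department": "Sales"},
    ],
    "survey": [
        {"employee_id": "E001", "q1": 4},
        {"employee_id": "E002", "q1": 5},
    ],
}


def erase_employee(stores: dict[str, list[dict]], employee_id: str) -> int:
    """Delete every record tied to employee_id across all stores; return how many were removed."""
    removed = 0
    for rows in stores.values():
        kept = [r for r in rows if r.get("employee_id") != employee_id]
        removed += len(rows) - len(kept)
        rows[:] = kept  # update the list in place so the store itself changes
    return removed


print(erase_employee(stores, "E001"))  # 2
print(stores["survey"])                # [{'employee_id': 'E002', 'q1': 5}]
```

Returning a count gives the team a simple audit signal that the request actually touched data in each system.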

In the US, SEC disclosures offer a mechanism of governance. They are certainly less rigorous than GDPR, but requirements around company disclosures of human capital metrics are evolving.

Historically, companies were only required to report on gender and racial representation in their employee base; guidelines now suggest expanded disclosure of additional metrics. Most companies have not shifted to providing hard numbers, but they have gradually included more qualitative information. Disclosure is evolving and is expected to move toward well-defined metrics over time.

Above all, trust

Fundamentally, none of this matters without employee trust. Employees must trust their employers to use data responsibly. They must trust technology to keep their data safe. They must trust the government to appropriately regulate the use of this information. It all comes back to trust.

Building trust within an organization rests on two key tenets: transparency and communication.

If employees are in the know, they are much more comfortable sharing their data. For instance, with DEI surveys, employees often question what their manager can see. If communications are frequent, consistent, and clear, employees come to understand that reporting thresholds cannot be bypassed and that a manager has no way to access identifiable responses.

As a result, these employees are more likely to trust the process and give more honest feedback in the survey. On the flip side, when employees know who can see what, they also gain comfort in the process. For example, it must be clear to employees that only a Vice President at the company can view turnover rates by race.

This type of transparency and communication is invaluable.
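
The two safeguards employees are told about above, minimum group sizes on survey results and role-based limits on sensitive reports, can be sketched in a few lines. The threshold of 5, the role names, and the report names here are hypothetical; real tools set these through their own configuration.

```python
from __future__ import annotations

from collections import defaultdict
from statistics import mean

# Hypothetical minimum group size; smaller groups are never reported.
MIN_GROUP_SIZE = 5

# Hypothetical role levels and the level each report requires.
ROLE_LEVEL = {"manager": 1, "director": 2, "vice_president": 3}
REQUIRED_LEVEL = {"engagement_by_team": 1, "turnover_by_race": 3}


def can_view(role: str, report: str) -> bool:
    """Role-based check: unknown roles get level 0, below every report's requirement."""
    return ROLE_LEVEL.get(role, 0) >= REQUIRED_LEVEL[report]


def team_scores(responses: list[tuple[str, int]]) -> dict[str, float | None]:
    """Average survey score per team, suppressed (None) below MIN_GROUP_SIZE."""
    by_team: dict[str, list[int]] = defaultdict(list)
    for team, score in responses:
        by_team[team].append(score)
    return {
        team: mean(scores) if len(scores) >= MIN_GROUP_SIZE else None
        for team, scores in by_team.items()
    }


# Team A has 6 respondents; team B has only 2, so its result is suppressed.
responses = [("A", 4)] * 6 + [("B", 2), ("B", 5)]
print(team_scores(responses))
print(can_view("manager", "turnover_by_race"))         # False
print(can_view("vice_president", "turnover_by_race"))  # True
```

Because suppression happens before any result leaves the function, a manager viewing team B sees no score at all rather than a number that could point to two individuals.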

In conclusion

Privacy rules.

Conducting DEI analytics requires ethical rigor and a deep responsibility to protect the people. We cannot do this work without employees, and it is essential that we respect and protect their privacy every step of the way.


More from the blog

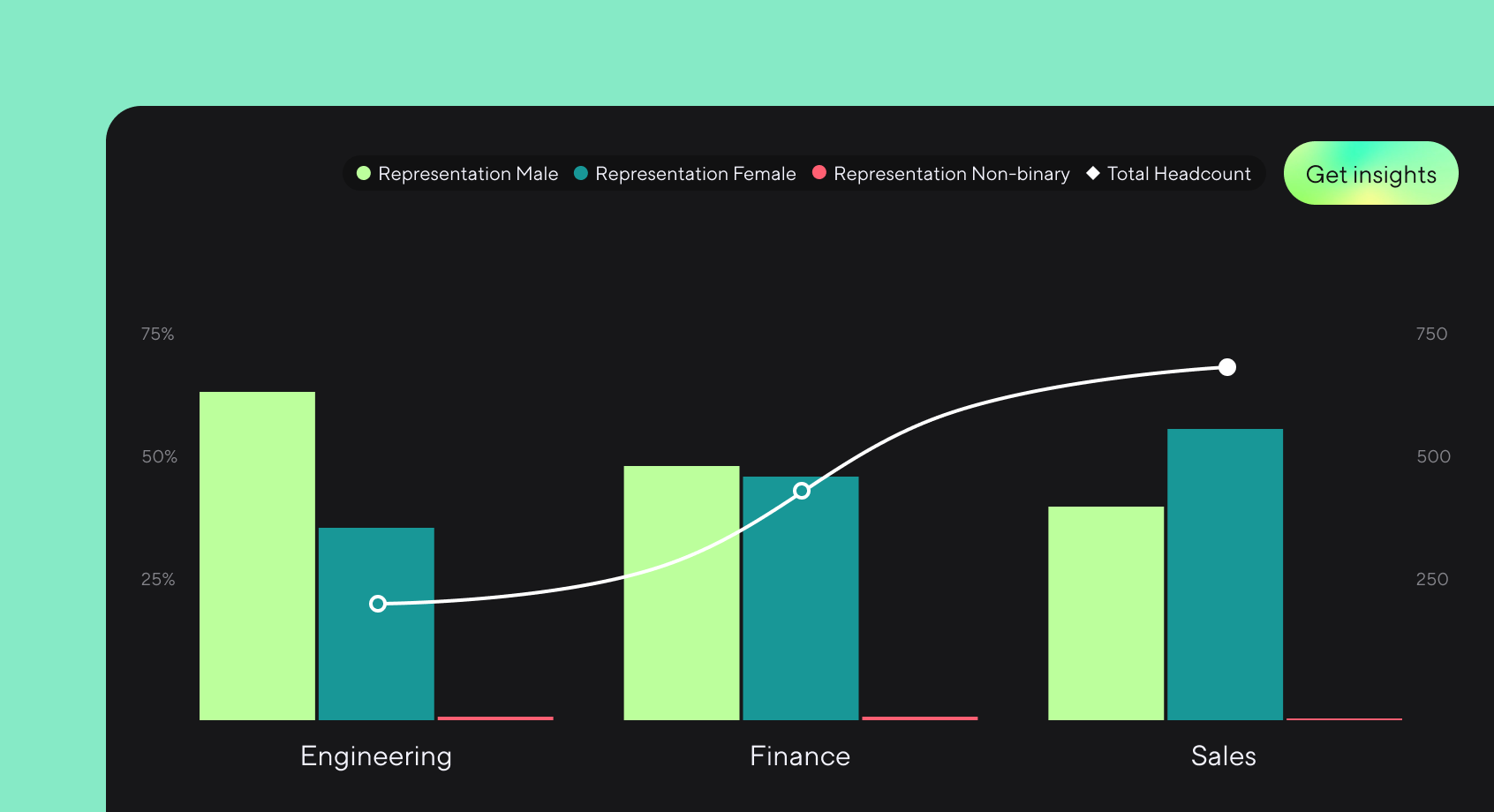

Announcing more powerful Dandi data visualizations

Team Dandi - Oct 23rd, 2024

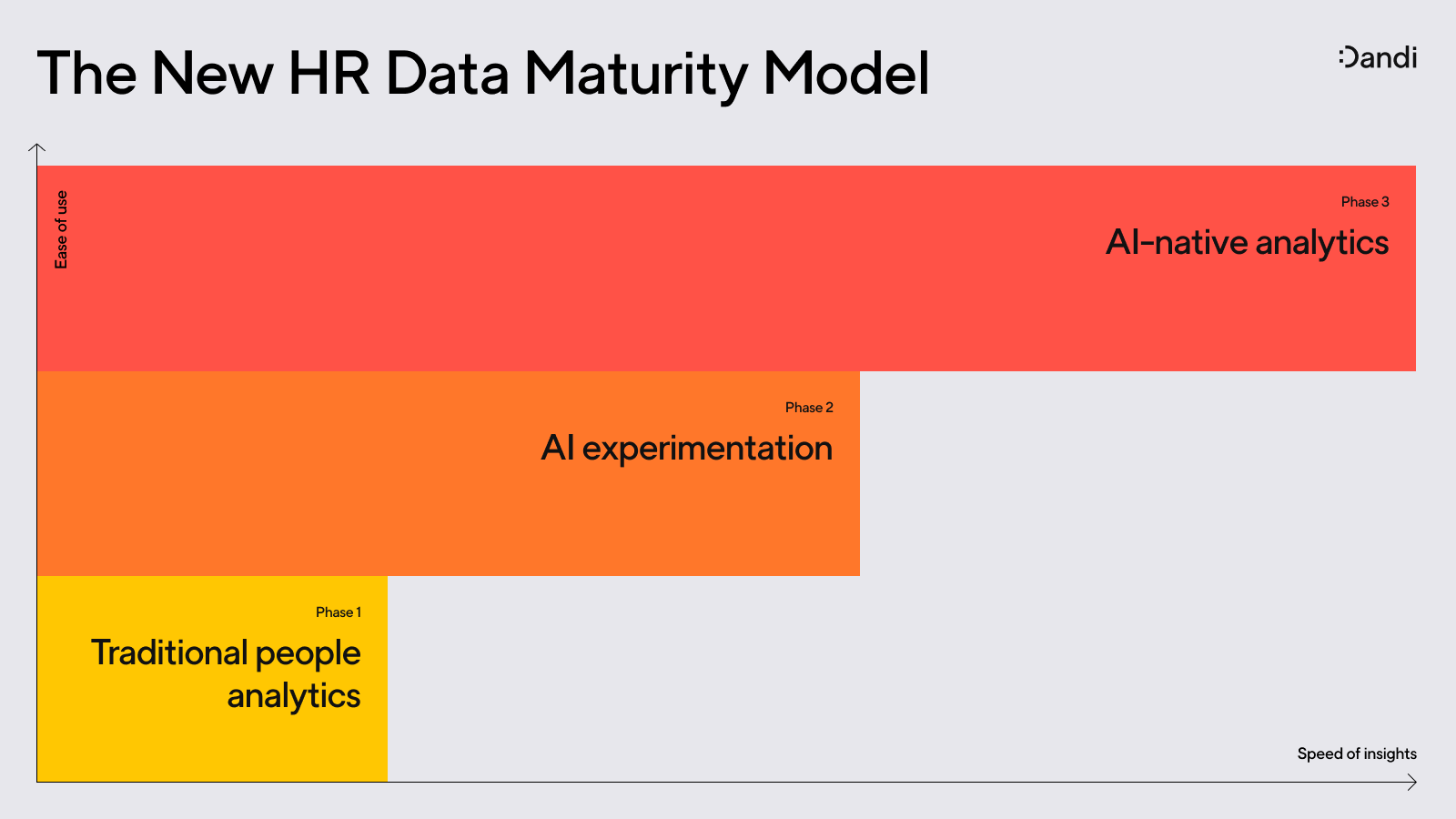

The New Maturity Model for HR Data

Catherine Tansey - Sep 5th, 2024

Buyer’s Guide: AI for HR Data

Catherine Tansey - Jul 24th, 2024

Powerful people insights, 3X faster

Team Dandi - Jun 18th, 2024

Dandi Insights: In-Person vs. Remote

Catherine Tansey - Jun 10th, 2024

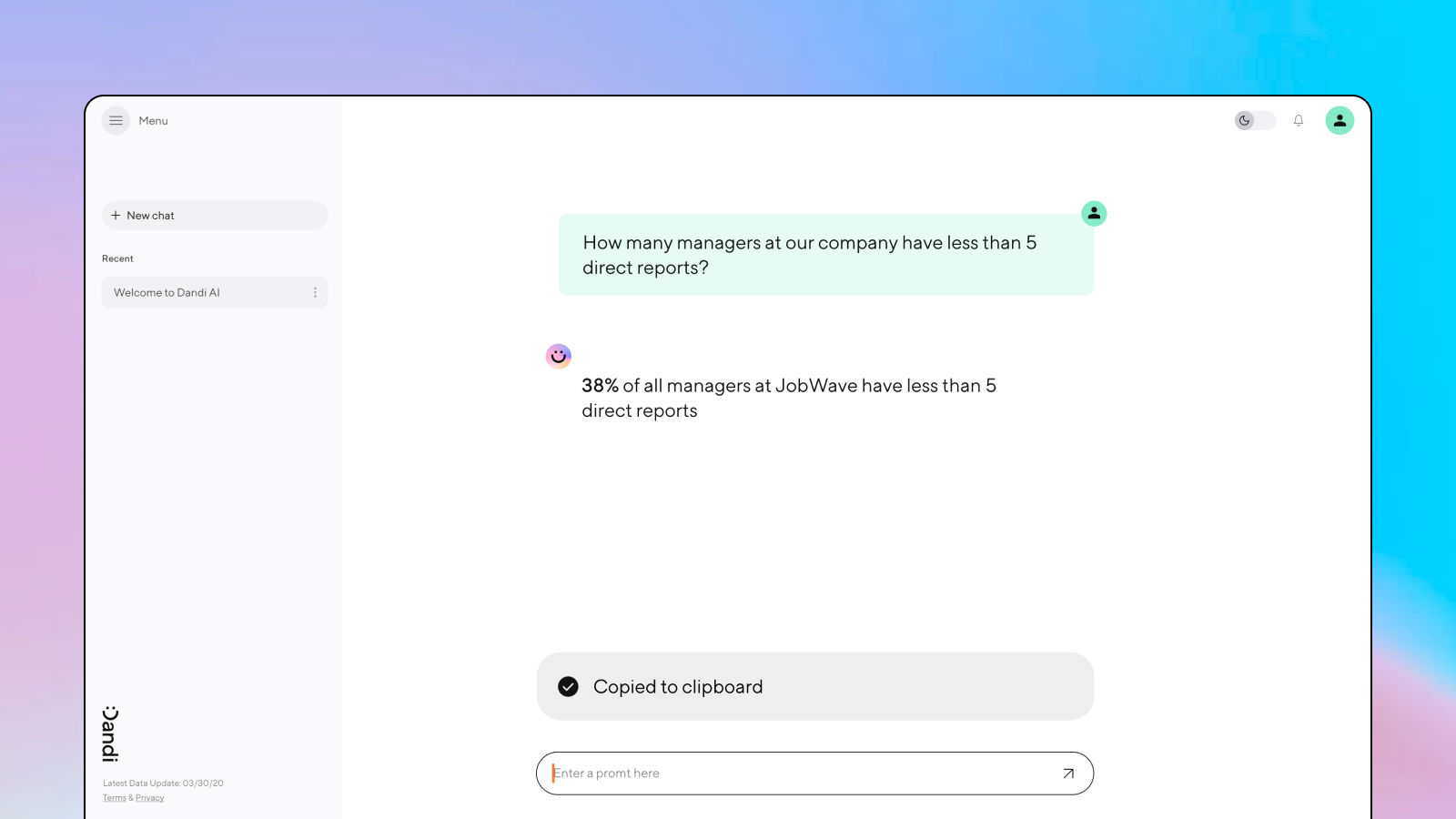

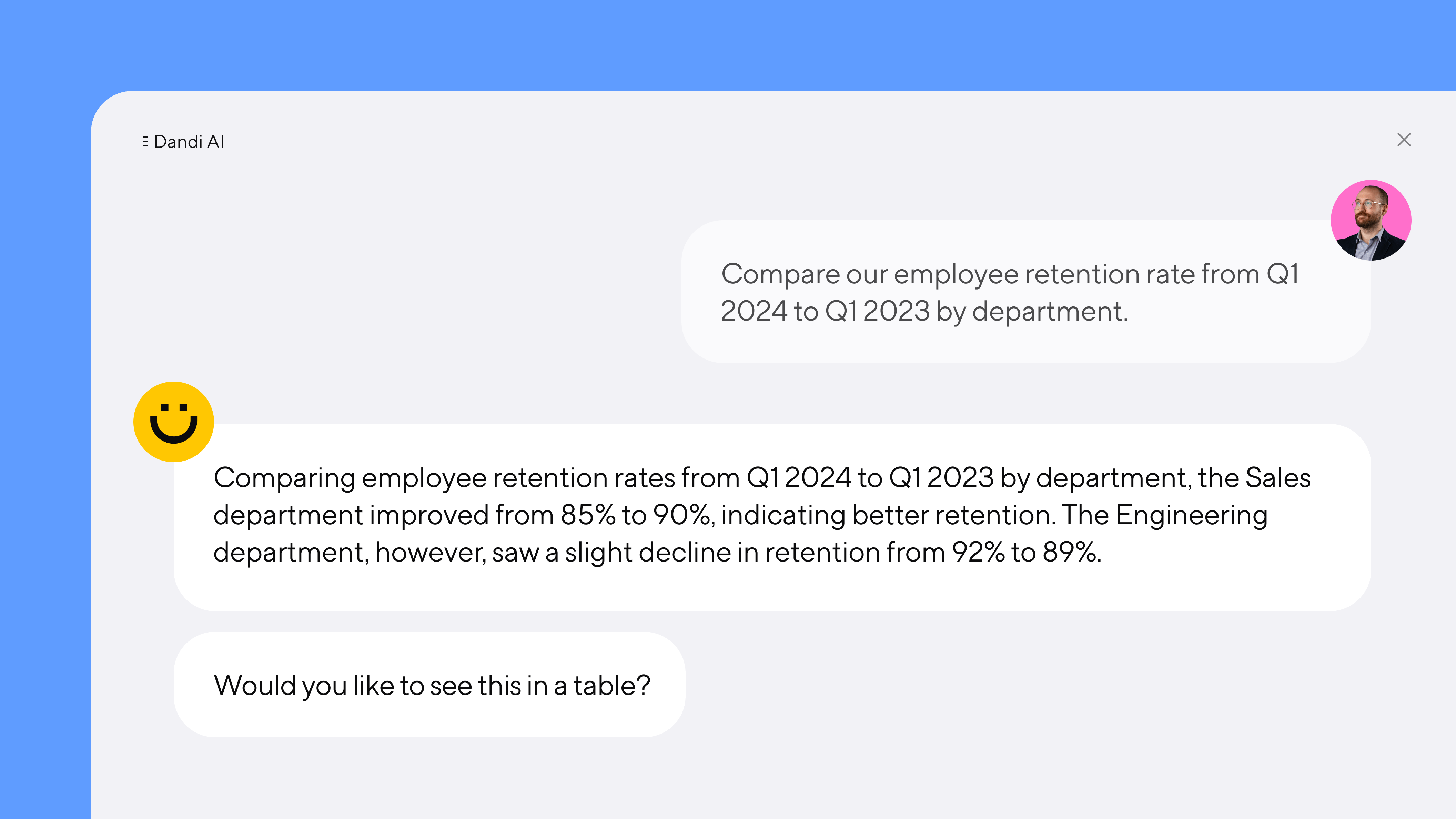

Introducing Dandi AI for HR Data

Team Dandi - May 22nd, 2024

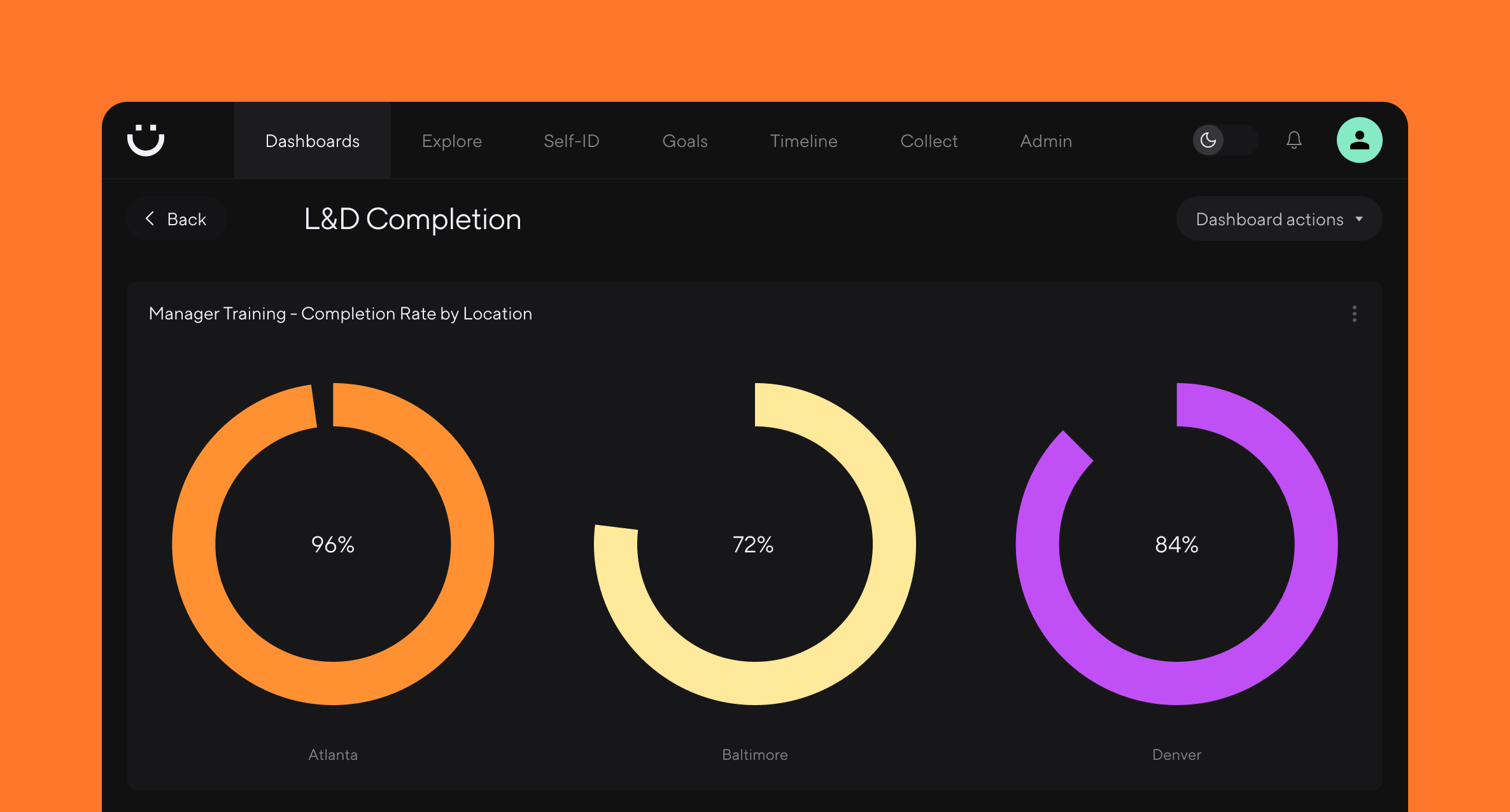

5 essential talent and development dashboards

Catherine Tansey - May 1st, 2024

The people data compliance checklist

Catherine Tansey - Apr 17th, 2024

5 essential EX dashboards

Catherine Tansey - Apr 10th, 2024

Proven strategies for boosting engagement in self-ID campaigns

Catherine Tansey - Mar 27th, 2024